Agile software development is a widely adopted methodology that emphasizes flexibility, collaboration, and iterative progress. As software engineers strive to optimize their workflow, the use of ChatGPT can offer numerous benefits to enhance their agility. However, it is important to be mindful of potential risks that could hinder the agile process. Let’s explore both the advantages and potential pitfalls of incorporating ChatGPT into agile software development.

Ways ChatGPT Could Help

There are some ways ChatGPT could help software engineers be more Agile:

-

-

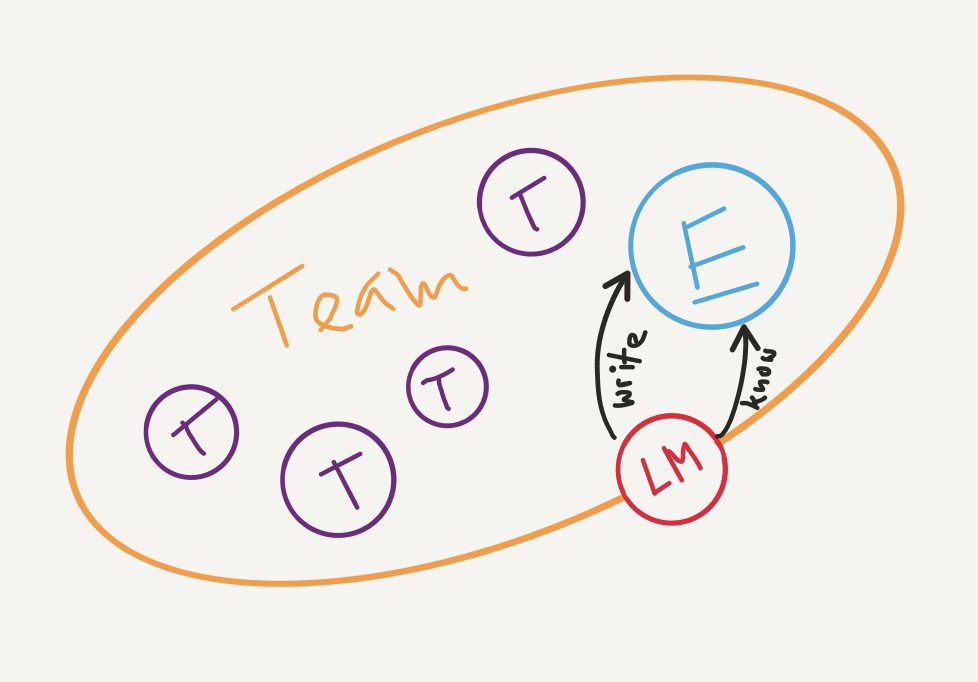

- Improved Communication: Effective communication is a cornerstone of agile development, and ChatGPT can facilitate this by providing real-time feedback and suggestions during team discussions. It can analyze code snippets, offer syntax recommendations, and even assist in identifying potential vulnerabilities. This could streamline the development process and fostering collaboration among team members.

-

- Rapid Prototyping: ChatGPT can aid in generating code snippets and prototypes, which can accelerate the development of minimum viable products (MVPs). This allows software engineers to quickly iterate and gather feedback from stakeholders. Doing so could lead to faster decision-making and validation of ideas, a core principle of agile development.

- Enhanced Documentation: Documentation is vital in Agile development to maintain transparency and knowledge sharing among team members. ChatGPT can assist in generating documentation templates, code comments, and other technical write-ups, saving valuable time and effort, and ensuring that project documentation remains up-to-date and accessible to all team members.

-

But… where there is light there is shadow.

Dangers to Agile Development with ChatGPT

A few points com to mind, when we want to highlight the risks:

-

-

- Over-reliance on Automation: Relying solely on ChatGPT for code generation and suggestions can potentially lead to a decrease in critical thinking and creativity among software engineers. Agile development values the human element, and excessive reliance on automation could result in a loss of innovation and diversity of ideas, which are essential for creating robust and unique solutions.

-

- Quality Control Challenges: While ChatGPT can provide suggestions for code snippets, it may not always guarantee the best practices or optimal solutions. If not thoroughly reviewed and validated, code generated by ChatGPT could potentially introduce bugs, security vulnerabilities, or violate coding standards. This may result in increased technical debt and rework, contradicting the principles of Agile development.

- Ethical Considerations: As a language model, ChatGPT can potentially generate biased or unethical code, especially when trained on biased data. This could result in unintentional propagation of bias or unethical practices in the software being developed, which could have ethical, legal, and reputational consequences, posing a risk to the integrity and fairness of Agile development.

-

Mitigating the Risks

There are though some things we can do to mitigate the risks:

-

-

- Strike a Balance: Software engineers should strike a balance between utilizing ChatGPT as a tool for assistance and leveraging their own expertise and creativity. It is essential to maintain critical thinking, innovation, and human decision-making in the agile development process.

-

- Validate Generated Code: Code generated by ChatGPT should be thoroughly reviewed, validated, and tested to ensure it adheres to coding standards, follows best practices, and meets quality requirements. This includes thorough testing, code reviews, and validation against project specifications to identify and fix any potential issues before deploying the code.

- Ethical Considerations: Be mindful of the ethical implications of using ChatGPT in software development. Training data should be diverse, inclusive, and free from bias. Review the code generated by ChatGPT for any potential biases or unethical practices, and take necessary steps to mitigate them. It’s important to have a strong ethical framework and guidelines in place when incorporating ChatGPT into the Agile development process.

-

An Experiment

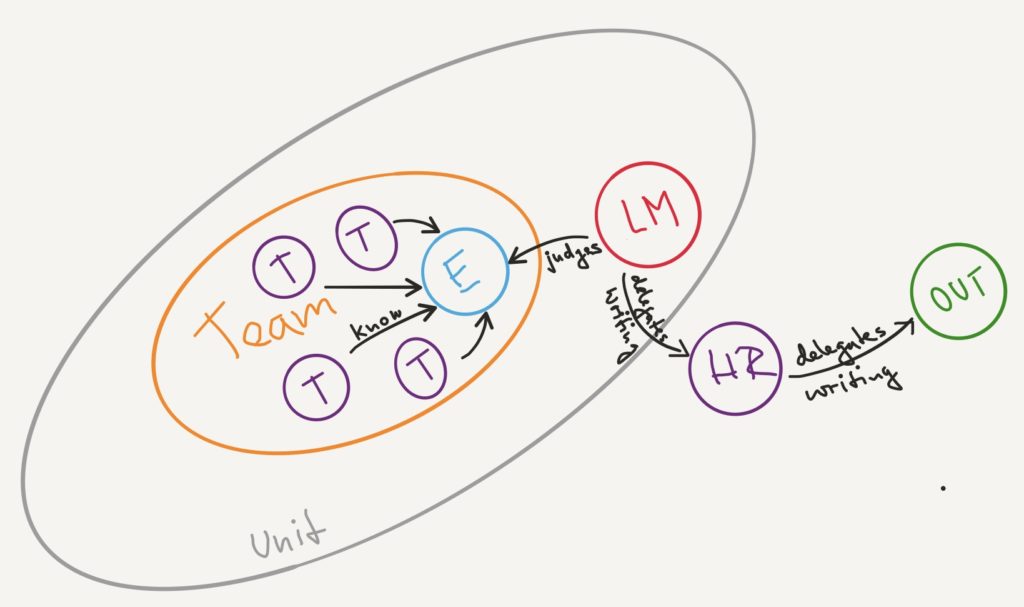

My personal (admittedly still limited) experience using ChatGPT to generate and explain code confirms the risks associated using the tool (at least in its current state).

Actually, when using it intensely and double checking its output, one can still see its limits.

The code it generates looks mostly clean, but it does not follow a specific coding style. Actually the temperature setting of the ChatGPT dialogue engine is set to give “creative” answers. So you will get different variants on how you could implement an idea. If you have no personal idea on how a solution should look like you can go with any proposed code. But if you try to stick to a specific coding style, you oftentimes find yourself second-guessing what it proposes.

How far ChatGPT’s understanding of the code goes can be easily challenged doing these few steps:

-

-

- Let it generate some code (for a problem domain, you might be familiar with).

→ I let it generate code to calculate the distance between two planets at any point in time.

- Let it generate some code (for a problem domain, you might be familiar with).

-

- It usually gives a verbal explanation on what the code does.

→ It explained to me, that it was using a Euclidean formula to calculate the distance.

- It usually gives a verbal explanation on what the code does.

-

- Then modify the generated code slightly (like a programmer would do, when moving it to an IDE and evolving it over time).

→ I just changed the calculation of the Euclidean formula slightly.

- Then modify the generated code slightly (like a programmer would do, when moving it to an IDE and evolving it over time).

- Paste the modified version of the code back into ChatGPT and ask it to explain the code.

→ ChatGPT explained to me that the code used the Euclidean formula. But this could not be true, as I changed the formula to be invalid.

-

Conclusion

Just this little experiment shows the danger of relying too much on ChatGPT’s help, at least in its current state. There actually is no reasoning behind what it spits out. Unfortunately for many people that do not take its output with a grain of salt its convincing authoritative tone will produce lots of non valid output.

So yes, validating, testing and questioning its output is essential. We as developers need to step up our game, by knowing what the expected results should be, before asking ChatGPT. So Agile development skills will be in high demand more than ever before.

In the coming weeks I will have a look at various practices from Agile software development (like test automation, evolutionary software design, etc. ) and how they are impacted by the use of artificial intelligence tools.

If you are interested in improving your Agile implementation, then check out my upcoming book.

Note: My experiments were done using ChatGPT version 3.5. As soon as I can get my hands on ChatGPT 4 I will do a follow-up to this post.